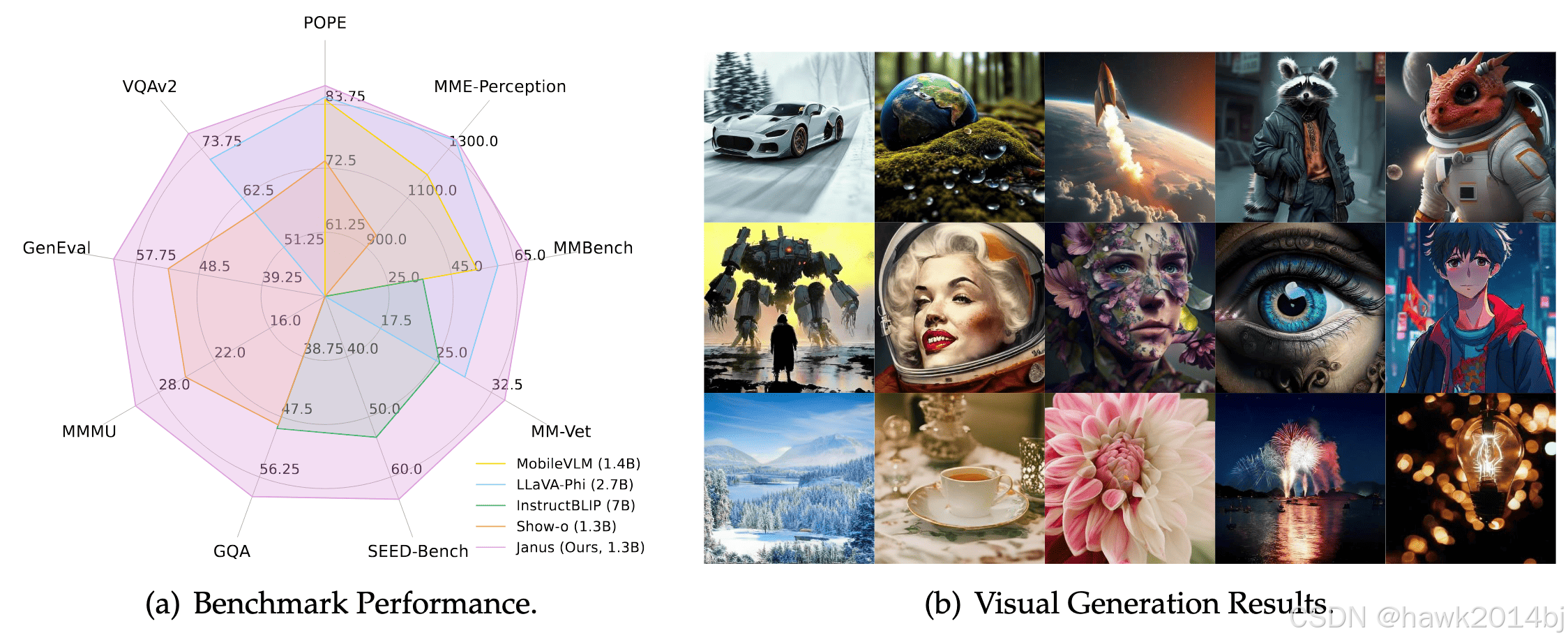

Deepseek 发布了 Janus 1.3B 多模型小模型,本文将使用 Aliyun 的 PAI 环境测试该模型,看看模型的效果如何:

登录 DSW 登录,并启动环境,Aliyun 首次给三个月免费额度,5000CU。

下载代码并安装

!git clone https://github.com/deepseek-ai/Janus

cd Janus

pip install -e .

运行测试代码

通过提示词生成图片:

import os

import PIL.Image

import torch

import numpy as np

from transformers import AutoModelForCausalLM

from janus.models import MultiModalityCausalLM, VLChatProcessor# specify the path to the modelvl_chat_processor: VLChatProcessor = VLChatProcessor.from_pretrained(model_path)

tokenizer = vl_chat_processor.tokenizervl_gpt: MultiModalityCausalLM = AutoModelForCausalLM.from_pretrained(model_path, trust_remote_code=True

)

vl_gpt = vl_gpt.to(torch.bfloat16).cuda().eval()conversation = [{"role": "User","content": "A stunning princess from kabul in red, white traditional clothing, blue eyes, brown hair",},{"role": "Assistant", "content": ""},

]sft_format = vl_chat_processor.apply_sft_template_for_multi_turn_prompts(conversations=conversation,sft_format=vl_chat_processor.sft_format,system_prompt="",

)

prompt = sft_format + vl_chat_processor.image_start_tag@torch.inference_mode()

def generate(mmgpt: MultiModalityCausalLM,vl_chat_processor: VLChatProcessor,prompt: str,temperature: float = 1,parallel_size: int = 16,cfg_weight: float = 5,image_token_num_per_image: int = 576,img_size: int = 384,patch_size: int = 16,

):input_ids = vl_chat_processor.tokenizer.encode(prompt)input_ids = torch.LongTensor(input_ids)tokens = torch.zeros((parallel_size*2, len(input_ids)), dtype=torch.int).cuda()for i in range(parallel_size*2):tokens[i, :] = input_idsif i % 2 != 0:tokens[i, 1:-1] = vl_chat_processor.pad_idinputs_embeds = mmgpt.language_model.get_input_embeddings()(tokens)generated_tokens = torch.zeros((parallel_size, image_token_num_per_image), dtype=torch.int).cuda()for i in range(image_token_num_per_image):outputs = mmgpt.language_model.model(inputs_embeds=inputs_embeds, use_cache=True, past_key_values=outputs.past_key_values if i != 0 else None)hidden_states = outputs.last_hidden_statelogits = mmgpt.gen_head(hidden_states[:, -1, :])logit_cond = logits[0::2, :]logit_uncond = logits[1::2, :]logits = logit_uncond + cfg_weight * (logit_cond-logit_uncond)probs = torch.softmax(logits / temperature, dim=-1)next_token = torch.multinomial(probs, num_samples=1)generated_tokens[:, i] = next_token.squeeze(dim=-1)next_token = torch.cat([next_token.unsqueeze(dim=1), next_token.unsqueeze(dim=1)], dim=1).view(-1)img_embeds = mmgpt.prepare_gen_img_embeds(next_token)inputs_embeds = img_embeds.unsqueeze(dim=1)dec = mmgpt.gen_vision_model.decode_code(generated_tokens.to(dtype=torch.int), shape=[parallel_size, 8, img_size//patch_size, img_size//patch_size])dec = dec.to(torch.float32).cpu().numpy().transpose(0, 2, 3, 1)dec = np.clip((dec + 1) / 2 * 255, 0, 255)visual_img = np.zeros((parallel_size, img_size, img_size, 3), dtype=np.uint8)visual_img[:, :, :] = decos.makedirs('generated_samples', exist_ok=True)for i in range(parallel_size):save_path = os.path.join('generated_samples', "img_{}.jpg".format(i))PIL.Image.fromarray(visual_img[i]).save(save_path)generate(vl_gpt,vl_chat_processor,prompt,

)显示图片

显示生成的图片

cd generated_samples

from IPython.display import Image, display

url = "./img_0.jpg"

display(Image(filename=url))

总结

模型生成的图片效果不是很好,可能是参数较少的原因,如果要求不高还是可以使用的。